This confused me for a while when I first learned it, so in case it helps anyone else:

The standard logistic sigmoid function, a.k.a. the inverse logit function, is

$$g(x) = \frac{e^x}{1 + e^x} = \frac{1}{1 + e^{-x}}$$

Its outputs range from 0 to 1, and are often interpreted as probabilities (in, say, logistic regression).

The tanh function, a.k.a. hyperbolic tangent function, is a rescaling of the logistic sigmoid $g$, such that its outputs range from -1 to 1. (The input is rescaled as well, by a factor of 2.)

$$\tanh(x) = 2\,g(2x) - 1$$

It’s easy to show the above leads to the standard definition $\tanh(x) = \frac{e^x - e^{-x}}{e^x + e^{-x}}$. The (-1,+1) output range tends to be more convenient for neural networks, so tanh functions show up there a lot.
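For completeness, here is one way to spell out that algebra. It writes $g(x) = e^x / (1 + e^x)$ for the logistic sigmoid and assumes the rescaling is $\tanh(x) = 2\,g(2x) - 1$. The last step divides the numerator and denominator by $e^x$.

$$
\begin{aligned}
2\,g(2x) - 1 &= \frac{2e^{2x}}{1 + e^{2x}} - 1 \\
&= \frac{2e^{2x} - (1 + e^{2x})}{1 + e^{2x}} \\
&= \frac{e^{2x} - 1}{e^{2x} + 1} \\
&= \frac{e^x - e^{-x}}{e^x + e^{-x}}
\end{aligned}
$$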

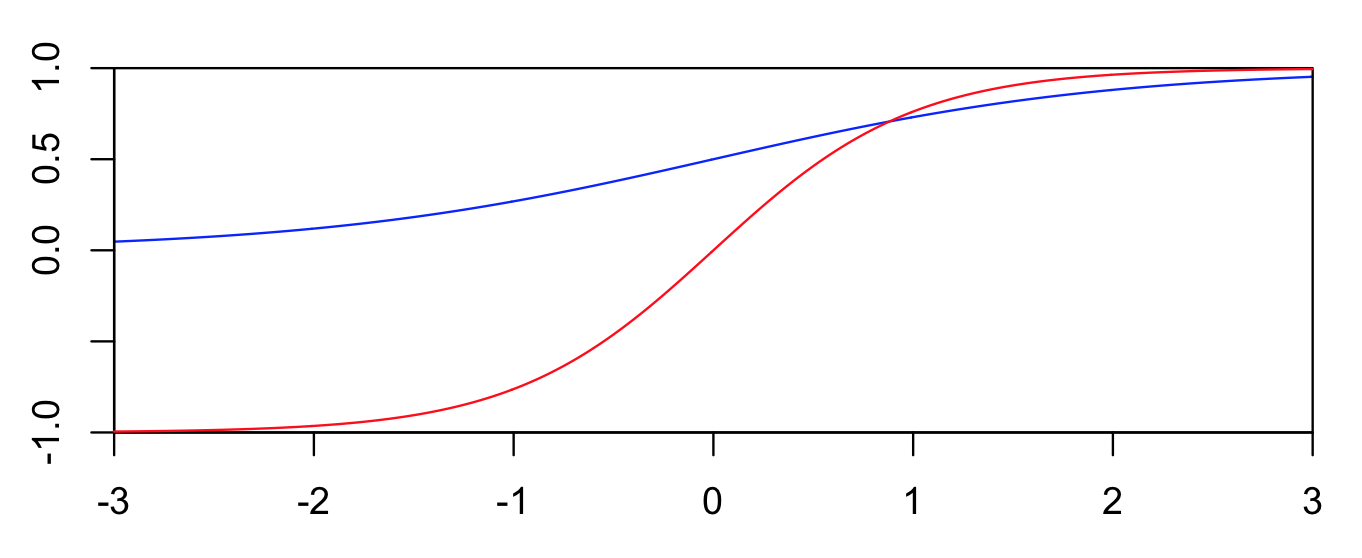

The two functions are plotted in the figure. Blue is the logistic function, and red is tanh.
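If you'd rather check the relationship numerically than by algebra, here is a minimal sketch using only Python's standard library. The function names `logistic` and `tanh_from_logistic` are made up for this illustration. It assumes the rescaling is "double the input, double the output, subtract one" and compares that against the built-in `math.tanh` at a few sample points.

```python
import math


def logistic(x: float) -> float:
    """Logistic sigmoid: 1 / (1 + e^(-x)), with outputs in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def tanh_from_logistic(x: float) -> float:
    """tanh written as a rescaled logistic sigmoid: 2 * logistic(2x) - 1."""
    return 2.0 * logistic(2.0 * x) - 1.0


# Compare the rescaled logistic against the library tanh at sample points.
xs = [-3.0, -1.0, -0.5, 0.0, 0.5, 1.0, 3.0]
max_abs_diff = max(abs(tanh_from_logistic(x) - math.tanh(x)) for x in xs)

# The difference should be at the level of floating-point rounding error.
print(f"largest absolute difference: {max_abs_diff:.2e}")
```

The sample points are arbitrary. For inputs that are large and negative, `math.exp(-x)` can overflow, so a more careful version would handle that case separately.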